NVIDIA puts its head in the clouds

NVIDIA announced some new technology today that is supposed to bring console-like quality and speed to cloud gaming.

Today at the 2012 NVIDIA GPU Technology Conference (GTC), NVIDIA took the wraps off a new cloud gaming technology that promises to reduce latency and improve the quality of streaming gaming using the power of NVIDIA GPUs. NVIDIA is offering the technology, dubbed GeForce GRID, to online services like Gaikai and OTOY.

The goal of GRID is to bring the promise of "console quality" gaming to every device a user has. The term "console quality" is kind of important here as NVIDIA is trying desperately to not upset all the PC gamers that purchase high-margin GeForce products. The idea is pretty simple, though, and should be seen as an evolution of the online streaming gaming services that we have covered in the past, like OnLive. Many believe the future of gaming is all about streaming services that let you play high-quality games on your TV, your computer, your tablet or even your phone without the need for high-performance, power-hungry graphics processors.

GRID starts with the Kepler GPU – what NVIDIA is now dubbing the first "cloud GPU" – that has the capability to virtualize graphics processing while being power efficient. The inclusion of a hardware fixed-function video encoder is important as well, since it will aid in compressing the images that the streaming gaming service delivers over the Internet.

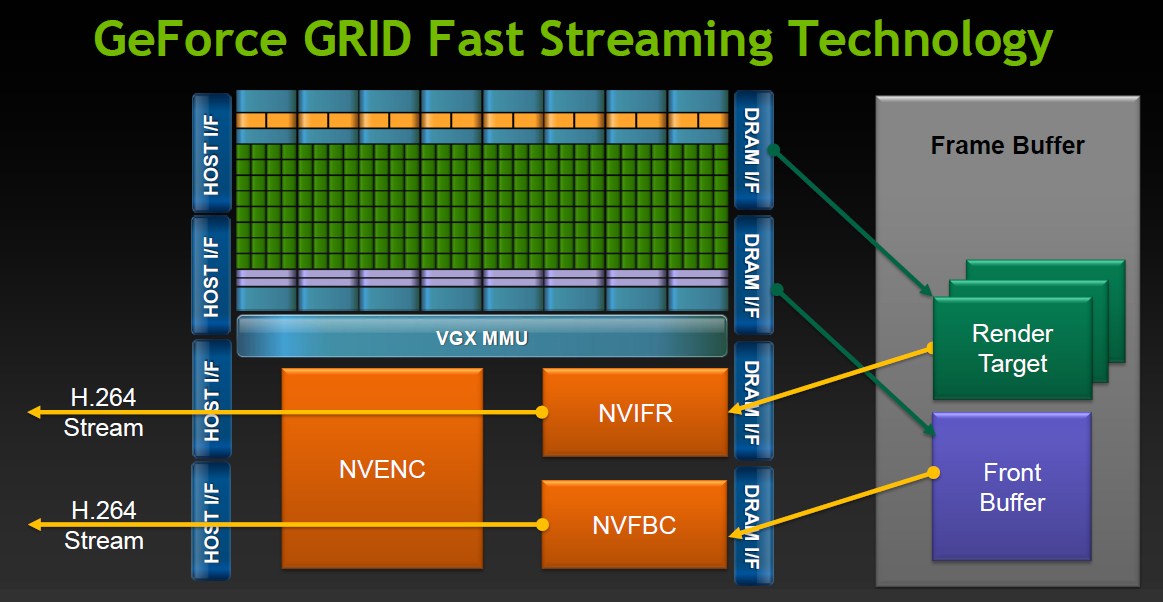

This diagram shows us how the Kepler GPU handles and accelerates the processing required for online gaming services. On the server side, the necessary process for an image to find its way to the user is more than just a simple render to a frame buffer. In current cloud gaming scenarios the frame buffer would have to be copied to the main system memory, compressed on the CPU and then sent via the network connection. With NVIDIA’s GRID technology, that capture and compression happen in GPU memory, so the frame can be on its way to the gamer faster.

The results are H.264 streams that are compressed quickly and efficiently and then sent out over the network to the end user on whatever device they are using.

On the client side, GeForce GRID comes into play again to decompress the H.264 stream and then render the final image to be displayed on the device. While this will obviously work on the PC and laptop side of things, there are still questions on whether GRID technology is necessary on the consumer end of phones, tablets and even TVs. Will NVIDIA require Tegra-based tablets and smart phones in order to take advantage of the streaming services with GRID–and does that mean that we will see NVIDIA-powered TVs in the near future?

UPDATE: The answer is no, it does NOT require NVIDIA technology on the decode side and in fact NVIDIA CEO Jen-Hsun Huang said "just about any decent H.264 decoder will work."

The primary benefit of GeForce GRID technology is that it is supposed to lower the latency between rendered images and the time the user sees them–and how soon they can respond to them. The lower bar on this graph, provided by NVIDIA, represents what a typical user’s latency would be on a "console gaming system." The times here are pretty vague, though the 100 ms game pipeline and the 66 ms display latency seem somewhat reasonable.

The middle bar represents the first generation of streaming gaming – including services like OnLive – that I have discussed (and berated) in the past. Total latency in this case has gone from 166 ms from game time to display time all the way up to 286 ms with the addition of 30 ms of capture and encoding time, 75 ms of network latency and 15 ms of decode latency on the client side.

NVIDIA GRID aims to bring the total latency UNDER that of current generation consoles. You still have latencies for capture, network and decode, though they are noticeably lower. Game pipeline time is cut in half thanks to the performance of Kepler GPUs and the GPU's ability to quickly pass information from the frame buffer to the encoders. Capture and encode has gone from 30 ms down to 10 ms with the fixed-function NVENC (hardware video encoder) unit on the Kepler GPUs. Decode time is also decreased on the client side with NVIDIA technology and even network latency is reduced from 75 ms to 30 ms.
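To put NVIDIA's numbers side by side, here is a minimal Python sketch that just adds up the stage times quoted above. The stage names are my own labels, the 50 ms GRID pipeline figure is simply "cut in half" applied to 100 ms, and I am assuming the 66 ms display latency stays the same for GRID. The text doesn't give a GRID decode time, so the sketch only works out how much room is left for decode if the total is to land under the console figure.

```python
# Stage latencies in milliseconds, as quoted in the article from NVIDIA's chart.
# The dictionary keys are illustrative labels, not NVIDIA's terminology.
console = {"game_pipeline": 100, "display": 66}

cloud_gen1 = {
    "game_pipeline": 100,
    "capture_encode": 30,
    "network": 75,
    "decode": 15,
    "display": 66,
}

# GRID: pipeline "cut in half" (100 -> 50), encode 10 ms, network 30 ms.
# ASSUMPTION: display latency is unchanged at 66 ms.
# The decode time is not quoted, so it is deliberately left out here.
grid_known = {
    "game_pipeline": 50,
    "capture_encode": 10,
    "network": 30,
    "display": 66,
}


def total(stages):
    """Sum the per-stage latencies of one pipeline."""
    return sum(stages.values())


console_total = total(console)
gen1_total = total(cloud_gen1)
grid_known_total = total(grid_known)

# Decode time at which GRID would exactly tie the console total;
# GRID has to decode faster than this to come in under it.
decode_budget = console_total - grid_known_total

print(f"Console:            {console_total} ms")
print(f"First-gen cloud:    {gen1_total} ms")
print(f"GRID (no decode):   {grid_known_total} ms")
print(f"GRID decode budget: {decode_budget} ms")
```

By these numbers, GRID decode would have to come in under about 10 ms for the total to beat the 166 ms console bar.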

Honestly, the only timing reduction I have a problem with is the network latency–how NVIDIA plans to solve the problems of physics involved with network infrastructures needs to be proven. The idea of "estimations" or "predictions" can work with some game types better than others, but I think hardcore gamers are going to be skeptical until proven otherwise. The only real answer is to build out more data centers to make sure there is one within a reasonable distance of the consumer.

I’m sure that the reason they posted a decreased network latency time is because they have some sort of more efficient encoding technology that allows the H.264 video to be even smaller in size, which means lower download times, and less network latency. I doubt they meant overcoming the current bandwidth constraints.

Fair enough – though a ping time is a ping time.

Keep in mind that they can’t do anything much fancier on the encode because they have admitted to being able to use “just about any H.264 decoder” on the receiving end.

True and true. But ping on a connection (I’m sure they’re taking into account the best of the best for internet connections) would probably range between 10 and 25ms. I’m thinking that the time it takes to transfer high quality H.264 video is more substantial than the ping delay, which is why better compression would make sense to me.

I was really just trying to rationalize their statistic there. Otherwise, like you guys said, it would be ridiculous for them to claim that they can somehow make the infrastructure better.

Thanks for the reply by the way. It’s nice to see you guys in the comments section. Love the site and the podcast.

I’ll have to admit, this is both innovative and terrifying news on the gaming front.

If this takes off, there will be no need for massive gaming computers anymore. It’s kind of scary.

I mean, Gaikai has leagues lower latency than OnLive does. But this is going to give pause to developers who might want to treat cloud gaming as a passing fad.

I, incorrectly, made a prediction in 2008 about the future of gaming. I thought then that a Linux live disc would be the future. I was terribly wrong on that front.

Personally, I am interested in a different use case. I am currently learning 3D modeling, and have been using Mudbox on my PC. I would ideally like to use a tablet-optimized version, say on my Kindle Fire, to allow me to mold the model with my hands. Using this cloud could give me that, and the latency issues may not matter so much.

NVIDIA talked about that use case pretty specifically, with standard GPU virtualization as an option called NVIDIA VGX. Could be VERY powerful for development!

How can a reviewer say 100ms + 66ms is reasonable for any console?

Typical gaming latencies.

LAN 10ms

Same Coast 30ms

Half US 60-70ms

Across Atlantic +80-100ms

Across Pacific +100-120

Anything else FORGETOBOTUIT.

Please keep in mind that the 100ms from “game time” is there for you even when you are playing on a local display. It is not “latency” in the typical way you are thinking.

Something very promising that will also help is Gaikai’s non-linear downloads:

http://gamasutra.com/view/news/168734/Gaikai_adds_rapid_downloads_to_cloud_game_arsenal.php

Maybe they can even get the devices to do part of the processing to counter the latency even more.

This is all very cool, but I for one will need to see it work before I have an opinion. IN THEORY streaming games rendered on the cloud should be quite do-able, maybe not “twitch” mmpfps, but there should be a lot of games that can be handled this way, HOWEVER…

I have been “hurt” before. OnLive was sooooooo exciting as a concept, we all waited on pins and needles for its arrival, and we all know how that turned out.

Time will tell. If this does work as well as they say, it may change gaming forever. Time will tell.