GK106 Completes the Circle

NVIDIA completes the Kepler release cycle with the GeForce GTX 660 2GB card utilizing the GK106 GPU.

The release of the various Kepler-based graphics cards has been interesting to watch from the outside. Though NVIDIA certainly spiced things up with the release of the GeForce GTX 680 2GB card back in March, and then with the dual-GPU GTX 690 4GB graphics card, for quite some time NVIDIA was content to leave the sub-$400 markets to AMD's Radeon HD 7000 cards and, of course, to NVIDIA's own GTX 500-series.

But gamers and enthusiasts are fickle beings – knowing that the GTX 660 was always JUST around the corner, many of you were simply not willing to buy into the GTX 560s floating around Newegg and other online retailers. AMD benefited greatly from this lack of competition and only recently has NVIDIA started to bring their latest generation of cards to the price points MOST gamers are truly interested in.

Today we are going to take a look at the brand new GeForce GTX 660, a graphics card with 2GB of frame buffer that will have a starting MSRP of $229. Coming in $70 under the $299 GTX 660 Ti card released just last month, does the more vanilla GTX 660 have what it takes to replicate the success of the GTX 460?

The GK106 GPU and GeForce GTX 660 2GB

NVIDIA's GK104 GPU is used in the GeForce GTX 690, GTX 680, GTX 670 and even the GTX 660 Ti. We saw the much smaller GK107 GPU with the GT 640 card, a release I was not impressed with at all. With the GTX 660 Ti starting at $299 and the GT 640 at $120, there was a WIDE gap in NVIDIA's 600-series lineup that the GTX 660 addresses with an entirely new GPU, the GK106.

First, let's take a quick look at the reference card from NVIDIA for the GeForce GTX 660 2GB – it doesn't differ much from the reference cards for the GTX 660 Ti and even the GTX 670.

The GeForce GTX 660 uses the same half-length PCB that we saw for the first time with the GTX 670 and this will allow retail partners a lot of flexibility with their card designs.

One very impressive aspect (once you see the performance at least) is that the GTX 660 only requires a single 6-pin power connector, though slightly blurred in our highly artistic photo above. This allows the GTX 660 to find its way into more systems with smaller power supplies and smaller chassis.

The output configuration remains the same though – a pair of dual-link DVI outputs, full-size HDMI and full-size DisplayPort. Still the best configuration available in my book.

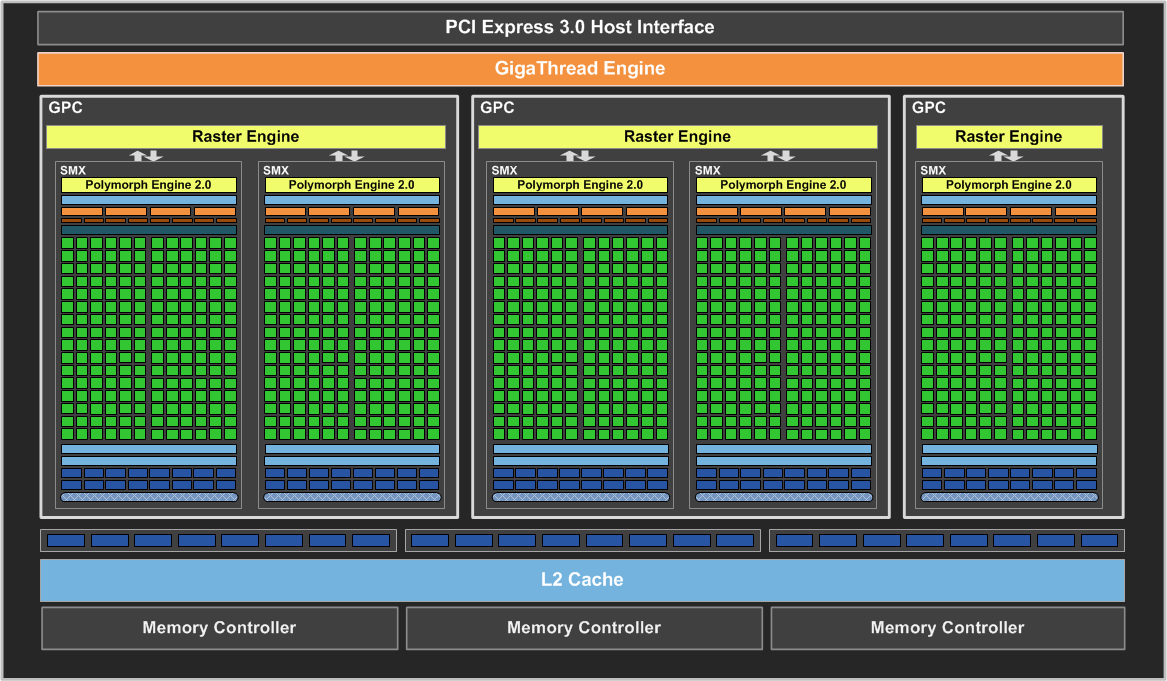

If you have seen the other Kepler block diagrams in previous reviews you will immediately recognize that the GK106 GPU is significantly smaller than GK104. The GTX 660 GPU consists of 960 CUDA cores, a drop from the 1344 cores found in the GTX 660 Ti. Texture units on the GPU drop from 112 to 80 on the lower cost product. The ROP count holds steady at 24, paired, as you would expect, with a 192-bit memory bus.

Once again, even though the GTX 660 uses a 192-bit memory bus, the graphics card will ship with 2GB of frame buffer made possible by supporting mixed density memory modes. Essentially, one of the three available 64-bit memory controllers will have access to a full 1GB of memory while the other two access 512MB each.
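To make the mixed-density arrangement concrete, here is a minimal Python sketch of the arithmetic. The per-controller capacities and the 64-bit controller width come from the paragraph above; the function name and the split into a "balanced" region striped across all three controllers are assumptions for illustration only, not something NVIDIA has confirmed for this card.

```python
# Illustrative sketch only. Capacities per controller are taken from the text;
# the "balanced" vs. "remainder" split is an assumption about how striping
# could work, not a documented detail of the GTX 660.
CONTROLLER_WIDTH_BITS = 64
controller_capacity_mb = [1024, 512, 512]  # one 1GB controller, two 512MB controllers


def memory_layout(capacities_mb, width_bits=CONTROLLER_WIDTH_BITS):
    """Summarize total capacity and bus width for a set of memory controllers."""
    total_mb = sum(capacities_mb)
    bus_width_bits = width_bits * len(capacities_mb)
    # Assumption: memory striped across every controller is limited by the
    # smallest controller, so only that much can use the full bus width.
    balanced_mb = min(capacities_mb) * len(capacities_mb)
    return {
        "total_mb": total_mb,
        "bus_width_bits": bus_width_bits,
        "balanced_mb": balanced_mb,
        "remainder_mb": total_mb - balanced_mb,
    }


layout = memory_layout(controller_capacity_mb)
print(layout)
```

Under those assumptions the three controllers add up to 2048MB on a 192-bit bus, with 1536MB reachable across all three controllers and the last 512MB sitting behind the single larger one.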

You might be wondering why the GPU seems unbalanced – NVIDIA claims that the GTX 660 is using the FULL GK106 GPU. The SMX on the far right of the block diagram does look a bit lonely without a partner, and my initial guess was that there was another SMX on the die that remained unused. NVIDIA says otherwise – so let's just move on.

The table above gives us the rest of the important specifications for GK106 and the GTX 660 graphics card. You can see that while memory bandwidth stays the same at 144.2 GB/s, the texture filtering rate drops to 78.4 GigaTexels/s.

Die size on the new GK106 shrinks quite a bit along with the transistor count – going from 3.54B on GK104 to 2.54B.

The base clock speed of the GTX 660 is 980 MHz with a typical Boost clock of 1033 MHz – the memory data rate continues to hit the 6.0 GHz mark. This is actually HIGHER than the GTX 660 Ti that had a base of 915 MHz and a Boost clock of 980 MHz.
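For readers who want to check the spec sheet numbers, the quoted bandwidth and texture rate follow from the bus width, texture unit count and clocks given in this review. The sketch below uses the conventional formulas; the function names are made up for illustration, and the 6.008 Gbps effective data rate is an assumption chosen because a flat 6.0 Gbps would give 144.0 GB/s rather than the 144.2 GB/s quoted.

```python
def memory_bandwidth_gbs(bus_width_bits, data_rate_gbps):
    """Peak memory bandwidth in GB/s: bytes per transfer times transfers per second."""
    return bus_width_bits / 8 * data_rate_gbps


def texture_fill_rate_gtexels(texture_units, base_clock_mhz):
    """Peak texture filtering rate in GigaTexels/s at the base clock."""
    return texture_units * base_clock_mhz / 1000


# GTX 660: 192-bit bus, 80 texture units, 980 MHz base clock (from the review).
# Assumption: effective memory data rate of 6.008 Gbps per pin.
gtx660_bandwidth = round(memory_bandwidth_gbs(192, 6.008), 1)
gtx660_texture_rate = round(texture_fill_rate_gtexels(80, 980), 1)

# GTX 660 Ti: 112 texture units, 915 MHz base clock (from the review).
# This figure is derived here, not quoted from NVIDIA's table.
gtx660ti_texture_rate = round(texture_fill_rate_gtexels(112, 915), 1)

print(gtx660_bandwidth, gtx660_texture_rate, gtx660ti_texture_rate)
```

With those inputs the GTX 660 works out to 144.2 GB/s and 78.4 GigaTexels/s, matching the figures above. The same formula puts the GTX 660 Ti at roughly 102.5 GigaTexels/s despite its lower clocks, which is the gap the higher base clock on the GTX 660 is trying to narrow.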

Thermal design limits only drop by about 10 watts according to NVIDIA which is a bit surprising considering the smaller die size and the move to a single 6-pin power connection. Even before putting the new GeForce GTX 660 2GB card in our test bed we were starting to get a feeling that it could perform quite closely to the GTX 660 Ti…

One thing that NVIDIA was pushing with this release is the idea of the upgrade cycle and what kind of benefit users that are still on the GeForce GTX 460 or even the 9800 GT would see with this new card and GPU.

According to NVIDIA's numbers a user that is still gaming with the 9800 GT would see performance increase by more than a factor of four, while also getting new features of DX11 like tessellation and Shader Model 5.0. Because of this interesting angle, I decided to include some testing with a legacy 9800 GT, GTX 460 1GB and even a GTX 560 Ti so that you can more easily see how the performance of the GTX 660 compares to what YOU have in your PC today.

This is the true sweet spot gamers have been looking for; even NVIDIA in its own documents compares it to other greats (9800 GT, etc.).

I also really like that the review includes legacy cards. It gives a really nice comparison, rather than just comparing to what is currently available on the market.

Yup, I noticed that comparison as well. I don't remember exactly how 9800GT compares to 8800GT (were they exactly the same, just a rebrand?) but the latter was a card that sold like hotcakes :).

Yeah, the 9800 GT was a rebranded 8800 GT. Most of the time all that was done was a slight clock increase; later versions included a die shrink (65nm -> 55nm).

The 8800 GT and its bigger brother, the 8800 GTS 512MB (which was rebranded to be the 9800 GTX), really should never have been. I think NVIDIA launched them just in time for the holiday season that year; they were only on the market for about 3 months before they were rebranded. >.< But nevertheless, the 8800 GTs/9800 GTs were insanely popular gaming cards, and rightfully so I think.

I loved my 8800GTS 512! Nvidia did some really confusing stuff back then! Remember this?

8800GTS 640MB, 8800GTS 320MB, 8800GTS 512MB (G92).

The 512MB was way faster than the other 2.

Then the 8800GTS 512MB was rebranded as the 9800GTX (as mentioned above).

Then rebranded as the 9800GTX+ (was it 45nm then? can't remember)

Then rebranded as GTS 250.

What a trip!

Haha, yes I do remember the fun with GeForce 8 branding/marketing back then.

Yup, there is a lot I could go into about those cards (I've been a computer hardware geek since the GeForce 3 days, and video cards are my fav parts, lol).

Yes, the 9800 GTX was rebranded to be the 9800 GTX+, which was the die shrink (65nm -> 55nm).

45nm was skipped on video cards/GPUs mainly due to area density/manufacturing timing issues.

So that same G92 core went from 8800 GTS 512MB -> 9800 GTX -> 9800 GTX+ -> GTS 250! How time flies ^.^

Thanks for the trip down memory lane with the 9800GT. Identical to the earlier 8800GT except for a BIOS flash for that fancy 9 Series number.

Best GTX 660 review period, thank you for your hard effort.

Thanks for your feedback everyone. Let me know if you have any questions or suggestions!

At the risk of asking you guys to do more work ^.^, in these video card reviews, can you include a few more video cards for comparison's sake, rather than only the respective card's immediate competition or siblings?

Say in this case, adding a GTX 670, GTX 680, HD 7970, HD 7950, GTX 580, GTX 570, etc. Seeing how cards compete across and inside generations is really nice/helpful. And, unless the testbed has changed, you could almost input the benchmarks from previous reviews (though a “quick” re-run of the cards with updated drivers would be great).

I'd be happy to help ;), lol.

No way in hell would Skyrim bring a GPU to 27 fps min at 1680

fix your fucking test rigs damnit.

It happens during a scene transition – going from a town to the overworld.

then your “Minimum” result is fundamentally WRONG.

They aren't wrong, they represent the system as a whole anyway.

Are you guys using an older version of Skyrim for the tests?

Skyrim until patch 1.4 (I think) was much worse in terms of CPU performance. Since all cards are doing 27 min, this can only be caused by something else.

Latest Steam updates…

The transition scenes appear to be capped at 30fps, and aren’t part of gameplay. Including them in a benchmark for a video card doesn’t seem very illuminating.

It will be refreshing when people learn how FRAPS works, and why there are dips in the charting as a result of cut scenes. It will do it on any system. And while…no, it isn't particularly illuminating as benchmark data…it is a necessity that all cards are shown on the same play through, with the same cut scenes, and the same corresponding dips. Please get off your high horse.

One thing that I will take issue with, however, is the anti-aliasing settings. I am discouraged that NVIDIA reviews consistently limit the AA to a paltry 4x. And usually it's MSAA, not FSAA. It is pretty common knowledge that high levels of AA on NVIDIA cards, particularly at higher resolutions, are a weak point of their design. They typically show a steeper performance drop-off as the AA and resolution go higher than ATI cards would under similar conditions.

For the sake of fair and balanced testing, the true maximum settings should be run on the game, as a typical user would select from the start, rather than cherry-picking AA levels that are favorable to one vendor's hardware setup and that greatly skew expected real world performance.

If you are going to bother with the new WQHD resolutions, the least you could do is run 8x FSAA.